Once upon a time, when multicore processors were novelties, multicore was motivated by the simple fact that it was impossible to keep raising the clock frequency of processors. More “clocks” would simply result in an overheated mess. Instead, by adding more cores, much more performance could be obtained without having to go to extreme frequencies and power budgets. The first multicore processors pretty much kept the clock frequencies of the single-core processors preceding them, and that has remained the norm in the mainstream until today. Desktop and laptop processors tend to stay at 4 cores or fewer. But when you go beyond 4 cores, clock frequencies tend to go down in order to keep power consumption per package under control. A nice example of this can be found in Intel’s Xeon lineup.

Earlier this year, Intel released the Broadwell-based Xeon E5 v4 range of processors, and I am going to take a look at it from a clocks vs cores perspective. Disclosure: I work for Intel, and this text just represents my own personal analysis based on public data.

My interest in clocks vs cores is based on my work with Simics – Simics is the kind of program that likes high clock frequencies and robust cores. Making use of more cores requires target systems with available parallelism, or running several independent simulations on the same machine. Then the question becomes – what is the best Xeon to buy for a server to run Simics? What would give you the best performance? And that leads to a closer look at the Xeon line-up.

The Xeon range is an interesting place to look at how clocks compare to cores, since it is a broad range of processors built in the same technology and using the same components. If I were to try to look at clocks vs cores by comparing processors from different vendors, there would be many more variables in play. The same trend does hold true throughout the industry – the highest clock frequencies come with the lowest core counts, but the details vary considerably.

With the Xeons, we get a pretty nice data set. The power budget does vary across the range, but it is clear that there is an upper limit at 145W (with the exception of a workstation chip that can do 160W).

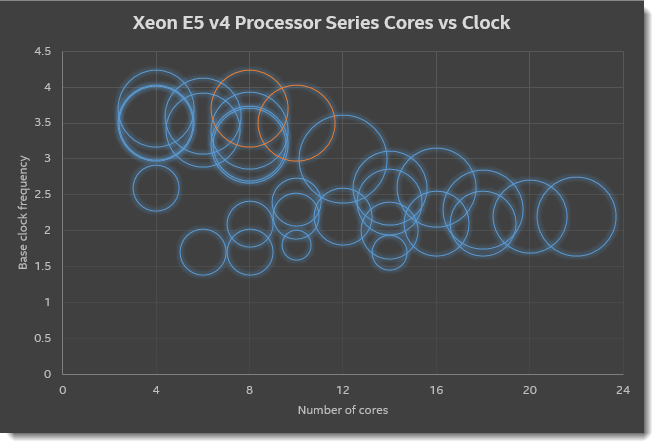

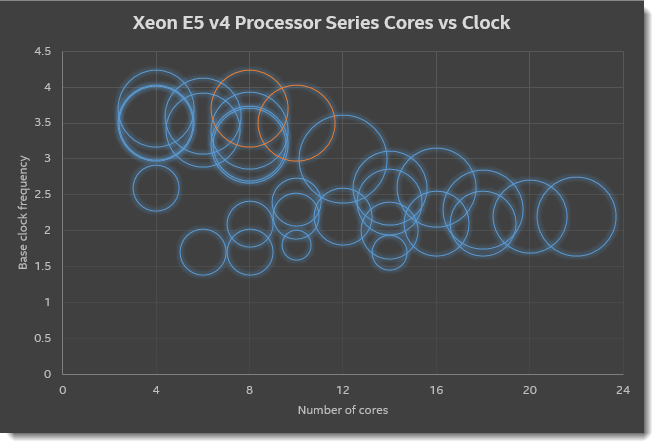

I plotted the core count vs clock frequency, based on public data from ark.intel.com. The size of the circle represents the TDP of the chip. The core count and clock frequency are found in the middle of the circle. The two red circles represent the latest Broadwell Core i7 Extreme processors. They fit right in with the server chips that they are closely related to.

It is clear that as core counts go up, the base clock frequency goes down. The highest clocks are found in the single-socket 1600 series of chips, where you have from 4 to 8 cores, and clock frequencies up to 3.70 GHz for the 4-core variants. It is worth noting that dual-socket chips have slightly lower clock frequencies than the single-socket variants with the same number of cores.

The most extreme scale-out chip (the E5-2699) on the right gives you 22 cores (that’s 44 threads, an insane amount!) but “just” 2.2 GHz in base clock frequency. If I were to spend the same amount of power on a 4-core E5-2637, it would run at 3.5 GHz base clock, more than 50% higher. The aggregate performance of the scale-out chips is clearly much higher. If you just multiply core count by clock frequency, it is a factor of more than 3 (48.4 vs 14 core-GHz), but for a workload that does lots of independent parallel processing, I think it might actually be even more than that.
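To make the arithmetic in the previous paragraph explicit, here is a minimal Python sketch. It uses only the core counts and base clocks quoted above. The "core-GHz" product is a deliberately naive throughput proxy (my assumption for illustration, not a benchmark), and the names `chips` and `core_ghz` are made up for this example.

```python
# Base-clock figures quoted in the text above.
chips = {
    "E5-2699": {"cores": 22, "base_ghz": 2.2},
    "E5-2637": {"cores": 4, "base_ghz": 3.5},
}


def core_ghz(chip):
    """Naive throughput proxy: core count times base clock (an assumption)."""
    return chip["cores"] * chip["base_ghz"]


many = chips["E5-2699"]
few = chips["E5-2637"]

# How much faster a single core is clocked on the low-core-count part.
per_core_ratio = few["base_ghz"] / many["base_ghz"]

# How much more aggregate core-GHz the high-core-count part offers.
aggregate_ratio = core_ghz(many) / core_ghz(few)

print(f"Per-core clock advantage of the 4-core part: {per_core_ratio:.2f}x")
print(f"Aggregate core-GHz advantage of the 22-core part: {aggregate_ratio:.2f}x")
```

This ignores turbo clocks, memory bandwidth, cache sizes, and hyper-threading, so it should be read as a rough bound on the trade-off rather than a performance prediction.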

For a general-purpose Simics server, I would definitely go with the lower core count machines. There are some interesting trade-offs between single-socket and dual-socket: the E5-1680 gives you 8 cores at 3.40 GHz, almost matching a pair of E5-2637s. Another option is a Broadwell Core i7 Extreme with 8 cores. However, for a parallelizable workload where you need maximum throughput, the E5-2699 is the thing to beat. With two of these on a motherboard, you get 88 threads of execution. I used the previous generation E5-2699 for some large-system simulation experiments, and it is kind of cool to see a simulation run on 50+ cores at the same time. At that point, there is a clear benefit to having more cores. There is also the fact that if only a few cores are being used, the E5-2699 is capable of catapulting individual cores up to a 3.70 GHz turbo clock! That provides a nice flexible function in the hardware – it can basically behave like a low-core-count machine when there is a workload that requires it.
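The single-socket vs dual-socket comparison above can be checked with the same kind of back-of-the-envelope sketch. The figures come from the text, the two-threads-per-core factor follows from the 22 cores / 44 threads quoted earlier, and the helper `socket_totals` is a hypothetical name used only here.

```python
THREADS_PER_CORE = 2  # the text counts 22 cores as 44 threads


def socket_totals(cores, base_ghz, sockets=1):
    """Totals for a server with `sockets` identical chips (naive model)."""
    total_cores = cores * sockets
    return {
        "cores": total_cores,
        "threads": total_cores * THREADS_PER_CORE,
        "core_ghz": total_cores * base_ghz,  # base clock only, no turbo
    }


single_1680 = socket_totals(cores=8, base_ghz=3.4)
dual_2637 = socket_totals(cores=4, base_ghz=3.5, sockets=2)
dual_2699 = socket_totals(cores=22, base_ghz=2.2, sockets=2)

for name, totals in [
    ("1x E5-1680", single_1680),
    ("2x E5-2637", dual_2637),
    ("2x E5-2699", dual_2699),
]:
    print(
        f"{name}: {totals['cores']} cores, {totals['threads']} threads, "
        f"{totals['core_ghz']:.1f} core-GHz"
    )
```

Under this simple model, one E5-1680 and two E5-2637s both end up with 8 cores and nearly the same core-GHz total, which is the "almost matching" point made above, while the dual E5-2699 configuration reaches the 88 threads mentioned in the text.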

Another interesting thing I saw in the diagram is that there are some low-power (small circle) chips that trade just a little clock frequency for fairly substantial savings in power. The E5-2630L, at 55W for 10 cores, really stands out.
