A blog post from Undo Software informed me that Microsoft has rather quietly released a reverse debugger tool for Windows programs – WinDbg with Time Travel Debug. It is included in the latest preview of WinDbg, available through the Windows Store, for the most recent Windows 10 versions (Anniversary update or later). According to a CppCon talk about the tool (YouTube recording of the talk), the technology has a decade-long history internally at Microsoft, but is only now being released to the public after a few years of development. So it is a new old thing 🙂

Updated: On 2017-10-09, the day after I released the first version of this blog post, I found lots of additional information on WinDbg TTD. The basic approach is described – along with the fact that it is being used for TTD – in a paper by Bhansali et al. from VEE 2006. Robert O’Callahan, the man behind RR, posted an analysis on his blog. The talk appeared on YouTube as well. Thus, much of the text has changed from the first version that I released. Some of it is marked with “update”, but the changes are really quite pervasive. So just re-read the whole thing.

Classification and scope

The documentation for WinDbg TTD that Microsoft has made available on MSDN does not explain how things work in any kind of depth, but it does give some hints as to what is going on under the hood. By reading the various limitations and possible operations, and looking at what you can do in the debugger itself, I worked out what I hope is a reasonable picture of how the implementation actually works.

It appears to be the case that WinDbg TTD covers:

- User-level code (but not kernel code or drivers or BIOS).

- Compiled native code, meaning C, C++, and presumably anything else that compiles to machine code (not .net code running on CLR, Java, etc.).

- Windows host (which is just like the many Linux-only tools, since user-level recording is OS-dependent by nature).

- Intel Architecture (x86) host.

- Can handle code-generating runtimes like the .net CLR, JVM, etc., as well as self-modifying code (which is necessary on x86).

- Multithreaded programs (which are pretty much unavoidable on Windows) running in parallel.

- Not able to go back in time and change the state to take a different execution path (which is the most common case).

- Not able to call functions in the debugged program in rr style.

It is not clear how threads are handled – I would assume they get serialized on a single execution thread, but I am not sure that is the case. Update: Threads are handled by recording them all running in parallel, using a binary translation approach (as described in the 2006 VEE paper) to get full insight into all instructions and all memory accesses. Thus, it is possible to record parallel threads running in parallel without imposing any kind of serialization, at the cost of increased recording overhead. It would seem to be similar to what the DrDebug tool built on Pin does (see http://jakob.engbloms.se/archives/2259, some ways down).

The implementation is based on record-replay, with some reconstruction of state from a sparse representation, but it stops short of the full reconstruction through re-execution that a virtual machine or virtual platform would do. The replay is done using the same binary translation engine as the recording, which is given the code to execute and initial register values. Any reads of unpredictable values (i.e., not written by the running code in the snippet) are taken from the trace file. Note that the trace file actually includes a copy of the target program binary.

There is mention of the emulator-based approach in the docs – it is found in a note about “derailment”, where the reverse debugger realizes that it has created an inconsistent current state of the program during reverse. From the TTD docs:

“TTD works by running an emulator inside of the debugger, which executes the instructions of the debugged process in order to replicate the state of that process at every position in the recording. Derailments happen when this emulator observes some sort of discrepancy between the resulting state and information found in the trace file.”

The position in the code is expressed in a way that is rather unusual. All other reverse debuggers that I can remember seeing use some kind of linear time concept that basically increments as the program progresses. WinDbg has a two-part “position” identifier concept that consists of a “sequencing event” number, and a “step” count. Such positions are maintained separately for each thread in a program. Presumably, the recording is synchronized on the sequencing events.
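One way to picture the two-part position is as a pair that sorts first by sequencing event and then by step. The following is only an illustration, not WinDbg code: the `Position` class and the hexadecimal `sequence:step` text form are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True, order=True)
class Position:
    """Hypothetical two-part position within one thread's recording."""
    sequence: int  # number of the most recent sequencing event
    step: int      # steps taken since that sequencing event

    @classmethod
    def parse(cls, text):
        # Assumed text form: two hexadecimal numbers separated by a colon.
        sequence, step = text.split(":")
        return cls(int(sequence, 16), int(step, 16))

    def __str__(self):
        return f"{self.sequence:X}:{self.step:X}"


earlier = Position.parse("1A:3")
later = Position.parse("1B:0")
print(earlier < later)  # ordering compares sequence first, then step
```

Since positions are kept per thread, comparing the step part across two different threads says nothing; only the sequencing event number would be meaningful between threads in a model like this.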

Record, Replay, Re-Execute a Bit

The recording engine for WinDbg TTD is derived from or based on the Nirvana binary translation engine presented in a 2006 paper: “Framework for Instruction-level Tracing and Analysis of Program Executions“, from the Virtual Execution Environments (VEE) 2006 conference. The Nirvana engine works together with the iDNA tracing system to record a parallel program in parallel – and later replay each thread’s execution just like it was in the recording.

The key to making this work efficiently and without gigantic trace files is to only record the memory values that cannot be reconstructed by running the code. Thus, we have a mix of re-execution and trace-based reverse debug, where the re-execution engine is used for short sequences of instructions, before getting the state forced back to the trace as needed.
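The idea of logging only what cannot be reconstructed can be sketched with a small toy model. This is a loose illustration of the principle, not the Nirvana/iDNA implementation: the `Recorder` and `Replayer` classes, the `interference` parameter that simulates another thread writing to memory, and the trace layout are all assumptions made for the example. The recorder keeps a private copy of what the thread last saw at each address and logs a load only when memory disagrees with that copy; the replayer re-executes the same code and takes values from the trace only at the logged loads.

```python
_MISSING = object()


class Recorder:
    def __init__(self, memory, interference=None):
        self.memory = memory                    # live memory: addr -> value
        self.interference = interference or {}  # load index -> (addr, value)
        self.cache = {}                         # what this thread last saw
        self.trace = []                         # (load index, value) pairs
        self.loads = 0

    def load(self, addr):
        # Simulate another thread or the kernel writing behind our back.
        if self.loads in self.interference:
            other_addr, other_value = self.interference[self.loads]
            self.memory[other_addr] = other_value
        value = self.memory[addr]
        if self.cache.get(addr, _MISSING) != value:
            self.trace.append((self.loads, value))  # not reconstructible
            self.cache[addr] = value
        self.loads += 1
        return value

    def store(self, addr, value):
        self.memory[addr] = value
        self.cache[addr] = value


class Replayer:
    def __init__(self, trace):
        self.trace = list(trace)
        self.cache = {}
        self.cursor = 0
        self.loads = 0

    def load(self, addr):
        # Force state from the trace only where the recorder logged a value.
        if self.cursor < len(self.trace) and self.trace[self.cursor][0] == self.loads:
            self.cache[addr] = self.trace[self.cursor][1]
            self.cursor += 1
        self.loads += 1
        return self.cache[addr]

    def store(self, addr, value):
        self.cache[addr] = value


def program(machine):
    a = machine.load(0x10)       # unknown initial value
    machine.store(0x20, a + 1)
    b = machine.load(0x20)       # written by this code itself
    c = machine.load(0x10)       # may have been changed by someone else
    return a + b + c


recorder = Recorder({0x10: 5}, interference={2: (0x10, 9)})
recorded_result = program(recorder)
replayed_result = program(Replayer(recorder.trace))
```

In this run only two of the three loads end up in the trace: the first read of an unknown location, and the read of a location that was changed from outside. The load of the value the code wrote itself is reconstructed by re-execution.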

When recording parallel threads, there is a coarse-grained notion of time across threads to make sure they do not drift apart too far – even though the technology used means that each thread can basically be replayed in isolation. It is very useful to be able to look at execution across multiple threads, and therefore there are sequence points that are used to sync up time across threads when operations happen that would tend to make threads exchange data. This makes it possible to see the effect of synchronization operations and memory barriers on the progress of a program as a whole. Thanks to the binary translation approach, it is easy to spot and handle memory barriers, lock-prefix, and xchg instructions.
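A minimal sketch of how sequence points could give a coarse cross-thread ordering, assuming a single shared counter that is bumped at every synchronizing operation. The `Sequencer` and `ThreadLog` names are hypothetical, and the interleaving is simulated in one Python thread to keep the example deterministic; a real recorder would need an atomic increment.

```python
import heapq
import itertools


class Sequencer:
    """Shared counter handing out globally ordered sequence numbers."""

    def __init__(self):
        self._counter = itertools.count(1)

    def next_number(self):
        return next(self._counter)


class ThreadLog:
    """Per-thread log; only synchronizing operations get a sequence number."""

    def __init__(self, name, sequencer):
        self.name = name
        self.sequencer = sequencer
        self.events = []

    def sync_op(self, what):
        self.events.append((self.sequencer.next_number(), self.name, what))


def global_order(*logs):
    # Each per-thread log is already sorted, so a merge recovers the
    # cross-thread order of the synchronizing operations.
    return list(heapq.merge(*(log.events for log in logs)))


sequencer = Sequencer()
t1 = ThreadLog("T1", sequencer)
t2 = ThreadLog("T2", sequencer)
t1.sync_op("lock A")
t2.sync_op("xchg flag")
t1.sync_op("unlock A")
t2.sync_op("lock A")
order = global_order(t1, t2)
```

Everything a thread does between two of its own sequence numbers stays unordered relative to other threads, which matches the coarse-grained notion of time described above.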

The “key frames” used in the time concept are more like checkpoints in Simics reverse debugging, in that they represent a complete state across all threads. It makes it easy for the debugger to jump to certain points in the execution and set up a consistent state across the threads there. Neat and useful.
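Jumping to a point in time with checkpoint-like key frames usually means restoring the nearest earlier key frame and running forward from there. The sketch below shows that general technique under simplifying assumptions (time is a single integer, the state is a plain number, and `step` is a made-up deterministic function); it is not a description of how WinDbg stores or uses its key frames.

```python
import bisect


def seek(key_frames, target, step):
    """Return the state at time `target`.

    key_frames: list of (time, state) pairs sorted by time.
    step: function mapping the state at time t to the state at time t + 1.
    """
    times = [time for time, _ in key_frames]
    index = bisect.bisect_right(times, target) - 1
    if index < 0:
        raise ValueError("target lies before the first key frame")
    time, state = key_frames[index]
    while time < target:  # re-execute forward from the key frame
        state = step(state)
        time += 1
    return state


def step(state):
    # Stand-in for executing one instruction of the recorded program.
    return (state * 3 + 1) % 1000


# Take a key frame every 100 steps of a 300-step run.
key_frames = []
state = 7
for time in range(301):
    if time % 100 == 0:
        key_frames.append((time, state))
    state = step(state)

state_at_250 = seek(key_frames, 250, step)
```

The spacing of the key frames is the usual trade-off: denser key frames cost more storage but shorten the forward run needed to reach any given point.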

Execution is interrupted for events like system calls; the results of the call are then recorded in the trace, and execution is restarted after the call.

In the talk from CppCon, they point out that all I/O is suppressed during trace replay. That makes sense for network traffic, but there is some logic to wanting to see program console output or window system updates during the replay debug session. We did achieve this in Simics, but that required storing the visible state of the target consoles as part of the revexec state. Still, it is conceivable to jump back in time and then execute a series of system calls to draw things as part of moving forward towards a certain point in time. But for an approach like WinDbg where we use jumping around to various points in time as an important navigation mechanism, I can see why turning off I/O is the most robust and reasonable solution.

Sharing

The WinDbg approach allows you to share a recording with other users, without them having the original program installed. This is done by basically including the program code and initial state in the trace file (from the docs):

TTD trace files can be shared with others by copying the .RUN file. This can be handy for having a coworker help you figure out the problem. They don’t need to install the crashing app or do any other related setup to attempt to reproduce the issue. They can just load the trace file and debug the app as if it was installed on their PC.

It is not clear how the debug information for the program is handled – whether it is implicitly embedded into the .RUN file. It would seem to be rather necessary to make sense of the shared recording. As discussed in my previous blog post on the rr reverse debugger, cross-host replay indicates that a rather heavy implementation is being used for the recording and replay – while staying on the same host allows for a lighter-weight implementation. WinDbg would seem to be on the same level as UndoDB in terms of portable traces, while rr sticks to replaying on the same machine in order to optimize for performance.

Microsoft appears to be saying they use this ability to pull in bugs from users for analysis, without having users give up their complete software.

Interface

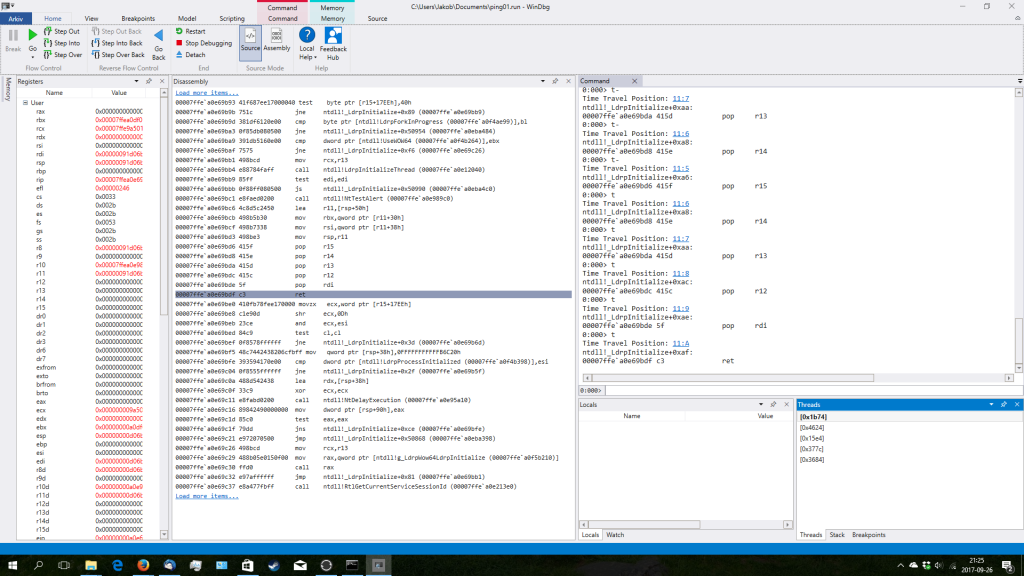

The new WinDbg Windows Store interface is pretty slick. I downloaded the application, and tried it on the standard Windows ping.exe binary. It let me single-step backwards and forwards, and switch between threads. There is clearly a rich recording of events like OS calls and their results, as well as function calls and returns, making for a robust and impressively smooth experience. Then again, I did not try anything particularly complicated, but what I saw looked really good for a preview version.

One nice touch in the GUI is the way the application goes out of its way to explain what is going on. The backwards buttons have tooltips on them that link to the online documentation, to make it very easy for users to learn more about the feature. Good thinking!

Query coolness

A really clever part of the user interface and underlying technology is that WinDbg turns the program trace into a database that can be queried using LINQ queries. This has been done before, but this implementation seems pretty deep. It takes reverse debugging one step beyond the classic breakpoint – it provides new ways to think about debugging. Instead of setting a breakpoint on some event and going backwards in time to find it, you can ask the recording for when it happened.
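To show the flavor of asking the recording instead of setting a breakpoint, here is a Python stand-in for such a query. It is not WinDbg or LINQ syntax: the `CallEvent` fields, the function names, and the positions are all invented for the illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CallEvent:
    """Hypothetical record of one function call found in a recording."""
    function: str
    return_value: int
    position: str  # where in the recording the call happened


# A made-up recording with made-up function names.
recording = [
    CallEvent("open_input", 3, "10:0"),
    CallEvent("read_block", 512, "14:0"),
    CallEvent("read_block", -1, "2C:0"),
    CallEvent("close_input", 0, "30:0"),
]


def where(events, predicate):
    # The query: filter the whole recording at once, no stepping involved.
    return [event for event in events if predicate(event)]


failed_reads = where(
    recording,
    lambda e: e.function == "read_block" and e.return_value < 0,
)
for event in failed_reads:
    print(f"{event.function} failed at position {event.position}")
```

The result of the query is a list of positions to jump to, which is a rather different workflow from running backwards until a breakpoint happens to trigger.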

The events available appear to be high-level events that do not happen on every instruction, such as exceptions, module loads, and thread creation. The most granular event listed in the documentation is the function call – which would seem quite useful indeed. Still, this means that a lot of information has to be stored, and many different indexes built to allow different types of queries – the WinDbg documentation mentions that the indexing file for a run might be twice as big as the underlying trace file.

However, note that the events do not replace instruction execution and memory access breakpoints. Indexing all instructions would seem a rather expensive operation indeed, and just doing the classic backwards iterative re-execution is likely just as fast.
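The classic backwards iterative re-execution can be sketched as follows: to find the most recent breakpoint hit before the current time, restore the latest checkpoint, run forward to where you were, and remember the last hit; if there was none, repeat from the checkpoint before that. This is the generic technique with made-up names (`reverse_continue`, `is_hit`, integer time), not WinDbg's actual algorithm.

```python
def reverse_continue(checkpoints, current_time, step, is_hit):
    """Return the time of the last breakpoint hit before current_time.

    checkpoints: list of (time, state) pairs sorted by time.
    step: function mapping the state at time t to the state at time t + 1.
    is_hit: predicate telling whether a state triggers the breakpoint.
    Returns None when no earlier hit exists.
    """
    end = current_time
    for start, state in reversed(checkpoints):
        if start >= end:
            continue  # checkpoint is not earlier than the window we need
        last_hit = None
        time = start
        while time < end:  # forward re-execution of one window
            if is_hit(state):
                last_hit = time
            state = step(state)
            time += 1
        if last_hit is not None:
            return last_hit
        end = start  # nothing found; search the previous window
    return None


def step(state):
    return state + 3  # toy program: the state at time t is 3 * t


checkpoints = [(0, 0), (4, 12), (8, 24)]
hit_time = reverse_continue(checkpoints, 9, step, lambda s: s % 5 == 0)
```

Each window is executed forward once, so the cost is bounded by the distance back to the hit plus one checkpoint interval, with no per-instruction index needed.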

Practical Issues

The overhead of the recording is reported as being in the 10x to 20x range. Not too bad, and apparently lower for programs that spend less time computing and more time being interactive and idle – sounds like it might work well for typical productivity software… Replay overhead is unclear, but they claim to have improved it by a factor of 10 over the original approach (which has to be the 2006 VEE paper). In the 2006 paper, the replay speed was 3x to 4x slower than the tracing speed, putting it upwards of 100x from the native program execution. With this optimization, it would seem replay is now at 10x slow-down compared to native or less. Reasonable enough.

Another question that I have which does not seem to be answered in the available information is just which x86 ISA variant is supported. Since the core technology is an interpreter and binary translator, it will only support a certain set of instructions. Which ISA level is that? For example, does it handle new instructions like AVX?

TTD? Reverse?

It seems that time-traveling debug or time travel debugging is becoming more common as a term (again). Even so, most tools, companies, and projects still talk about reverse debugging. Undo, RR, gdb, and Simics all use “reverse” to describe the feature. If I look at the history of the field as far as I have been able to trace it, most people called it reverse until a 2005 paper introduced “time-traveling virtual machines”.

For more on the history of reverse debuggers, see my three-part series of blogs from 2012 that I have since updated as new products and tools have appeared or been found in the archive of history: history 1, history 2, history 3.

Just a quick clarification, Microsoft TTD does not serialize thread execution. All threads are traced in parallel.

Thanks for the clarification, Tim! Any pointers as to how that is done, and how it relates to replay?

A paper was published in 2006 that describes how this can be done (this was for the original version of time travel debugging at Microsoft): https://www.usenix.org/legacy/events/vee06/full_papers/p154-bhansali.pdf

Some things have changed since then to do this even more efficiently, but the fundamental ideas haven’t changed.

For the record, we recently added to rr the ability to record on one machine and replay on another … if you have an up-to-date Linux kernel. See http://robert.ocallahan.org/2017/09/rr-trace-portability.html