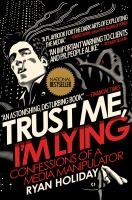

Trust Me, I’m Lying – Confessions of a Media Manipulator by Ryan Holiday is a brilliant book about the online media landscape and how it is driving public discourse in a very bad direction. Ryan has a very interesting background: he worked in marketing and was part of the problem he describes. In his work, he exploited the weaknesses of the new media landscape to get stories about his clients into blogs, the press, and often national television, to get them attention and ultimately business. In this book, he describes what he did, how he did it, and why we as a society have a big problem. It has changed the way I read online media and made me a lot more critical of things I previously did not notice.

Tag: blog commentary

Grant Martin on the “Verification is 70% of the Effort” Claim

Over at Taken for Granted, Grant Martin just did a very good write-up on the “accepted fact” that verification is seventy percent of the effort in a chip design. The claim is not easy to prove, but is it really just an urban myth that has gained credibility by being repeated over and over again?

Go over there to see what he has to say.

Cadence-Ran vs Synopsys-Frank over Low-Power and Virtual Things

Over the past few weeks, there has been an interesting exchange of blog posts, opinions, and ideas between Frank Schirrmeister of Synopsys and Ran Avinun of Cadence. It is about virtual platforms versus hardware emulation, and how to do low-power design “properly”. Quite an interesting exchange, and I think that Frank is a bit more right in his thinking about virtual platforms and how to use them. Read on for some comments on the exchange.

Cadence on Virtual Prototypes instead of Host Execution

Cadence technical blogger Jason Andrews wrote a short piece a couple of days ago on his perception that host-based execution is becoming unnecessary thanks to fast virtual platforms. In “Is Host-Code Execution History”, he tells the story of a technique from a long time ago where a target program was executed directly on the host, with its memory accesses captured and passed to a Verilog simulator. The problem being solved was the lack of a simulator for the MIPS processor in use, and the solution was pretty fast and easy to use. Quite interesting, and well worth a read.

However, like all host-compiled execution (which I also like to call API-level simulation), it suffered from some problems, and today’s virtual platforms might offer the speed of host-compiled simulation without those problems.

Simon Kågström, PhD

Yesterday, I had the honor of being the opponent at the PhD defense of Simon Kågström at Blekinge Tekniska Högskola (BTH, Blekinge University of Technology in English). His PhD thesis deals mainly with the multiprocessor port of an industrial in-house operating system, with a secondary theme being the design of the Cibyl C-programs-to-JVM translator. All of his papers are very well written and a joy to read, and the engineering work behind them is very solid.

The most important data in the PhD thesis is really just how much work it takes to do an SMP port of an OS kernel, and how hard it is to get performance up to good levels even after several years of work. It really emphasizes that hard work, perseverance, and lots of calendar time are what it takes to create a good SMP OS. That’s why Solaris and AIX are still years ahead of Linux in this respect: you just need to hit the snags, fix them, retest, and hit the next snag. It takes time to polish, basically.

So, if you have any interest in multiprocessor operating systems, Simon’s work is well worth a read. Also check out his blog at http://simonkagstrom.livejournal.com/. And by the way, he did pass.

Grant Martin on Manycore Multicore MPSoC AMP SMP Multi-X…

Grant Martin is a nice fellow from Tensilica who has a blog at ChipDesignMag. In a recent post, he raises the question of nomenclature and taxonomy for multicore processor designs:

…the discussion, and the need to constantly define our terms (and redefine them, and discuss them when people disagree) makes me wish that the world of electronics, system and software design had some agreement on what the right terms are and what they mean…

I think this is a good idea, but we need to keep the core count out of it…

Sun buys Montalvo

Sun just bought Montalvo, whose hardware I blogged about a while ago. And just as with Apple’s acquisition of PA Semi, the question of “why” comes up. Some analysts put it down to the simple fact that both Montalvo and PA Semi needed to be acquired, since their venture capitalists did not want to put in the next 100 million USD needed to go to silicon (Montalvo) or really expand on the opportunity already at hand (PA Semi). Here is my crazy guess.

Linux KVM for IBM Mainframes

There was an interesting little note at the CodeMonkey blog: the Linux KVM kernel hardware virtualization support now works on IBM z series mainframes, using the hardware virtualization support of the z architecture. It is nice to see some attention being paid to non-x86 architectures. There is also a nice historical note that the current x86 virtualization extensions were indeed inspired by the S/370 architecture from the mid-1970s. Cool.

Off-Topic: Studying Malware Analysis at HUT.fi

The F-Secure weblog is one of my regular reads, and today they presented one of the coolest industry-academia items in a long time: F-Secure are teaching an entire course, “Malware Analysis and Antivirus Technologies”, at the Helsinki University of Technology. Kudos to F-Secure for the time and money that must have gone into doing that!

Blog tip: The Wonderful World of Early Computing

There is a nice blog post over at Neatorama with many pictures of early computers. The material is nothing new to someone familiar with computing history, but the pictures collected are very nice indeed.

Brilliant Virtualization Comic

I had never seen the comics at xkcd.com before, but they are really quite brilliant nerdy comics. Since I like virtualization and simulation, I found number 350 at http://xkcd.com/350/ especially fun. And note that this is what some serious researchers are actually doing: using virtual machines as active honey pots (“honey monkeys”) that go out and contract infections by actively browsing the web, with machines in various stages of patching.

Multithreading Game AI

Over at an online publication called AI Game Dev, there is an elucidating post on how to do multithreading of game AI code (posted in June 2007). Basically, the conclusion is that most of the CPU time in an AI system is spent doing collision detection, path finding, and animation. This focus of time in a few domain-given hot spots turns the problem of parallelizing the AI into one of parallelizing some core supporting algorithms, rather than trying to parallelize the actual decision making itself. The key to achieving this is to make the decision-making part able to work asynchronously with the other algorithms, which is not trivial but still much easier than threading the decision making itself. The threading of the most time-consuming parts turns into classic algorithm parallelization, which is more familiar and easier to do than threading general-purpose large code bases. A good read, basically, that taught me some more about parallelization in the games world.

Mark Nelson’s Multicore Non-Panic and Embedded Systems

Via thinkingparallel.com, I just found an interesting article from last summer arguing that multicore does not, in fact, mean the imminent end of the computing world as we know it. Written by Mark Nelson, the article makes some relevant and mostly correct claims, as long as we stay in the desktop land that he knows best. So here is a look at these claims in the context of embedded systems.

FTF Paris: Debug connections threat to secure network devices

In a report from FTF Paris 2007, InfoWorld makes some interesting comments on the security and locking-down of mobile devices, in the piece “‘Flat IP’ mobile networks face new security challenges”.

Power.Org Dev Con: C Domination a Problem for Multicore

I just read an EE Times report from a panel at the Power.org Developers Conference (more accurately called the Power Architecture Developers Conference, or PADC) about programming multicore processors for the embedded market. Note that I was not there in person, so I can only comment on the few quotes in the article. The main conclusions are that:

- C/C++ is going to be the dominant language for embedded for the near future. Nothing surprising there.

- C/C++ being dominant means that parallelism in multicore processors, especially shared-memory systems, will be harder to exploit. That is certainly true.

- Tool vendors have no good idea about what to do next.

- You cannot expect to get traction with a new language.

In a sense, the panel was blaming the market for not having the good sense to adopt new tools to tackle multicore.

I don’t think things have to be that bleak.